
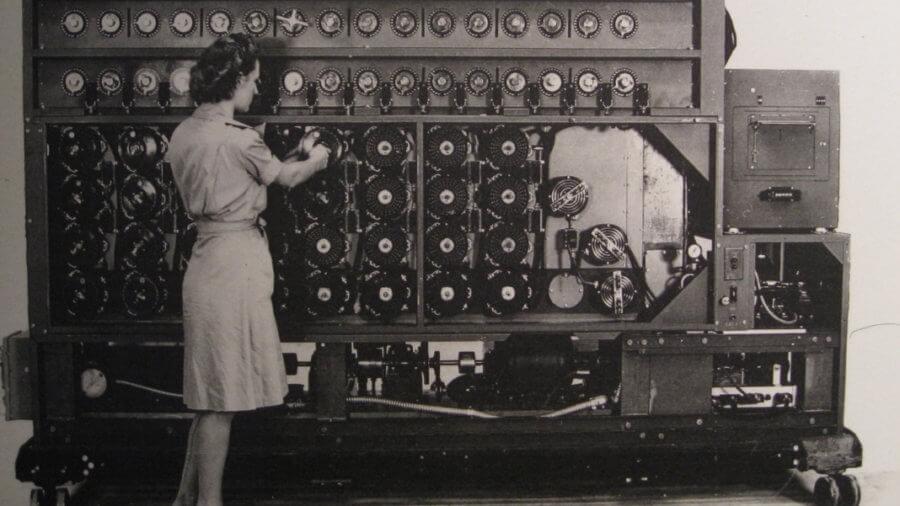

Two basic types of encryption schemes are used on the internet today. One, known as symmetric-key cryptography, follows the same pattern that people have been using to send secret messages for thousands of years. If Alice wants to send Bob a secret message, they start by getting together somewhere they can’t be overheard and agree on a secret key; later, when they are separated, they can use this key to send messages that Eve the eavesdropper can’t understand even if she overhears them. This is the sort of encryption used when you set up an online account with your neighborhood bank; you and your bank already know private information about each other, and use that information to set up a secret password to protect your messages.
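As a rough illustration of the symmetric pattern, here is a toy sketch in which Alice and Bob use the same secret key to both scramble and unscramble a message. The XOR scheme, the `xor_bytes` helper, and the sample message are assumptions chosen for brevity. This is not how a bank actually protects traffic, and a key used this way must never be reused.

```python
import secrets


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each byte of data with the matching byte of key (toy cipher)."""
    if len(key) < len(data):
        raise ValueError("key must be at least as long as the message")
    return bytes(d ^ k for d, k in zip(data, key))


# Alice and Bob agree on this key in private, before they are separated.
shared_key = secrets.token_bytes(32)

message = b"Meet me at noon"

# Alice encrypts with the shared key; this is what Eve might overhear.
ciphertext = xor_bytes(message, shared_key)

# Bob decrypts by applying the very same key.
recovered = xor_bytes(ciphertext, shared_key)
```

The point of the sketch is only that a single shared secret does both jobs: anyone holding `shared_key` can read the message, and anyone without it sees random-looking bytes.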

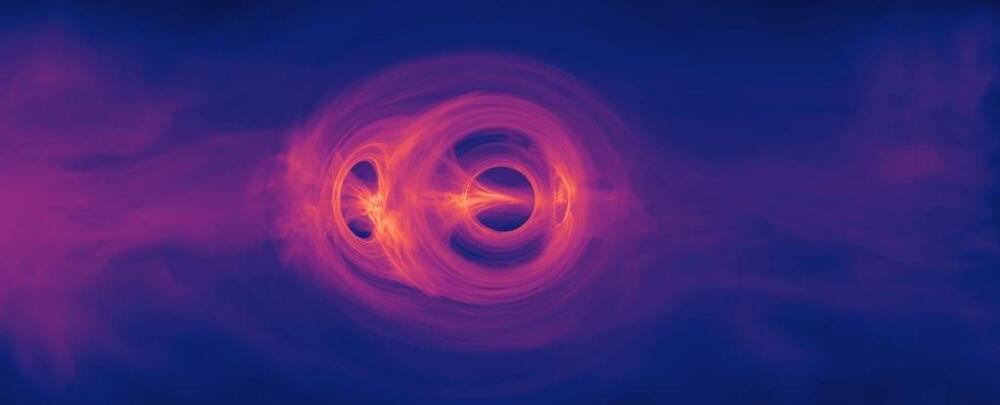

The second scheme is called public-key cryptography, and it was invented only in the 1970s. As the name suggests, these are systems where Alice and Bob agree on their key, or part of it, by exchanging only public information. This is incredibly useful in modern electronic commerce: if you want to send your credit card number safely over the internet to Amazon, for instance, you don’t want to have to drive to their headquarters to have a secret meeting first. Public-key systems rely on the fact that some mathematical processes seem to be easy to do, but difficult to undo. For example, for Alice to take two large prime numbers and multiply them is relatively easy; for Eve to take the result and recover the original numbers seems much harder.
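The asymmetry between multiplying and un-multiplying can be sketched in a few lines. The function names and the two primes below are illustrative assumptions; the primes are tiny by cryptographic standards, and trial division is only the most naive way Eve might attack the problem.

```python
def multiply(p: int, q: int) -> int:
    """Alice's easy direction: a single multiplication."""
    return p * q


def factor_by_trial_division(n: int) -> tuple:
    """Eve's hard direction: search for the smallest divisor of n.

    Returns (smallest_factor, cofactor). The loop may need on the order
    of sqrt(n) steps, which becomes hopeless once n has hundreds of digits.
    """
    if n % 2 == 0:
        return 2, n // 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 2
    raise ValueError("n has no nontrivial factors")


# Two small primes, chosen only for illustration.
p, q = 104729, 1299709

n = multiply(p, q)                      # one step for Alice
factors = factor_by_trial_division(n)   # tens of thousands of steps for Eve
```

Here Eve still succeeds quickly because the numbers are small. With each extra digit in the primes, Alice's multiplication gets only slightly slower, while this kind of search grows roughly tenfold for every two digits added to `n`.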

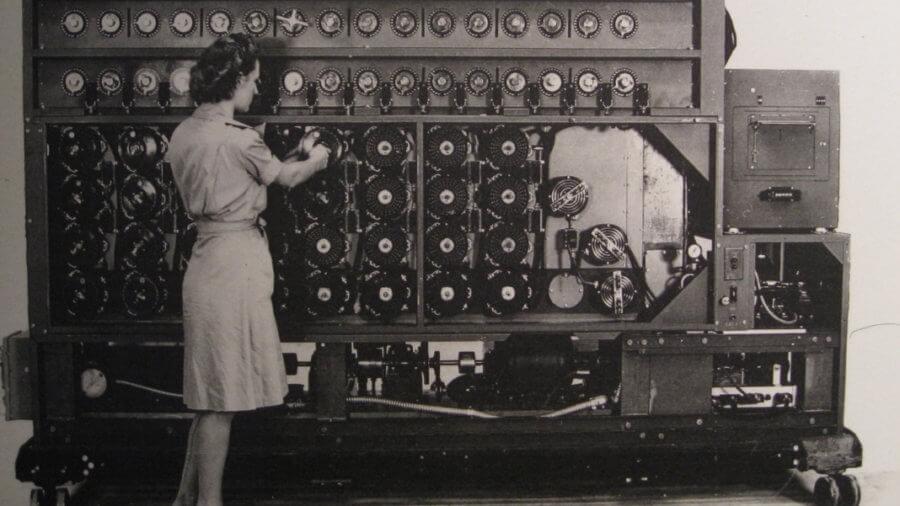

Public-key cryptography was invented by researchers at the Government Communications Headquarters (GCHQ) — the British equivalent (more or less) of the US National Security Agency (NSA) — who wanted to protect communications between a large number of people in a security organization. Their work was classified, and the British government neither used it nor allowed it to be released to the public. The idea of electronic commerce apparently never occurred to them. A few years later, academic researchers at Stanford and MIT rediscovered public-key systems. This time they were thinking about the benefits that widespread cryptography could bring to everyday people, not least the ability to do business over computers.